Honestly, the idea of hopping into a car, pressing a button, and just chilling while your ride does all the hard work still feels like sci-fi sometimes. But here we are, with Tesla, Waymo, Cruise, and a bunch of other companies throwing these self-driving cars at us like it’s some cool new app update. People on Twitter and Reddit are going nuts with opinions — some are super hyped like “my commute just became a Netflix binge,” while others are panicking about robots taking over the roads and killing kittens or whatever. I mean, I get it, it’s a lot to wrap your head around.

When I first saw one in action a few months back, it was like staring at a magic trick. The car smoothly switched lanes, avoided some guy texting while riding a bike, and then politely stopped at a red light without anyone touching the steering wheel. I couldn’t help but think, “Wow, humans might actually be obsolete behind the wheel.” But then I remembered that even the best human drivers have fender benders, so maybe cars that never get road rage are a good thing.

Not as easy as it looks

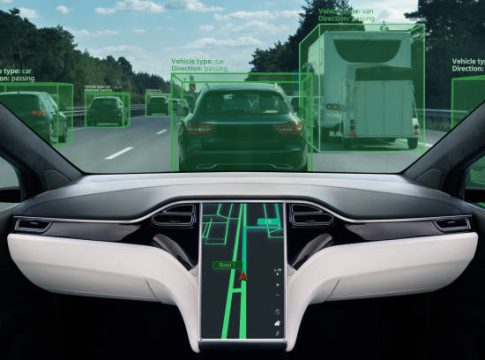

Here’s the thing, though: making a car drive itself isn’t just a matter of plugging in a chip and calling it a day. There’s a ton of tech going on behind the scenes, with LIDAR sensors, radar, fancy cameras, and neural networks all trying to guess what every pedestrian, dog, or squirrel is gonna do next. And don’t get me started on bad weather. Rain? Snow? Fog? They can blind the cameras and confuse the sensors, and suddenly that smooth robot ride can turn into a glitchy nightmare.

Some people like to assume self-driving cars will immediately make traffic disappear. I wish. If anything, we’ll probably get a weird transition phase where humans and robots fight for lane space. Imagine a self-driving car trying to predict that one guy who always drifts into your lane because he thinks he’s Vin Diesel. Yeah, the AI has a lot of learning to do before that works perfectly.

The scary part — ethics and responsibility

There’s this weird debate online about “who’s responsible if a self-driving car crashes?” Most people assume the company, but in reality, the laws are behind the tech by a mile. I mean, right now, if you hit a tree because you were texting, it’s on you. But if the car hits a tree because it misread a shadow, suddenly lawyers are having panic attacks. People on LinkedIn have long threads about this, filled with tech jargon and lawyer-level anxiety. And while it sounds boring, it’s actually kind of terrifying.

And then there’s the ethical stuff. Imagine the classic trolley problem but with a car. The AI has to decide between hitting a cyclist and swerving into a wall with passengers on board. Humans can make split-second moral calls, but can a car? Some engineers say yes, some say no, and honestly, I’m still waiting for a car that can even handle a roundabout without panicking.

Money and infrastructure — the silent hurdle

Everybody talks about the “cool factor,” but barely anyone mentions the cost. These self-driving systems are expensive — like, ridiculously expensive. Most people won’t be able to just buy one next year. Plus, our roads weren’t built for robots. Lane markings are faded, construction zones are messy, and traffic lights sometimes go rogue. Until cities upgrade their infrastructure, these cars will basically be like trying to play VR on a potato laptop — possible, but messy.

Then there’s insurance. Good luck figuring out premiums for a car that still makes mistakes, just less often than a human would. Insurers are trying to adapt, but it’s a wild ride. People online joke that your self-driving car might cost more to insure than your house, and honestly, that’s not that far off in some scenarios.

Cultural shift — people just aren’t ready

Even if the tech and laws magically sorted themselves out overnight, there’s still the human factor. Some people won’t ever trust a car without hands on the wheel. I’ve met folks who freak out if cruise control is on, and now we’re talking full robot driving. It’s a mindset shift that might take decades. On the flip side, younger generations who grew up with smartphones and Uber are way more open. They see cars as a service, not a status symbol or an extension of their ego.

Social media is already buzzing with memes about “AI stealing our driving jobs” — which is hilarious, but also a tiny bit true. Truck drivers, taxi drivers, delivery folks — their world is about to change drastically. Some of them are preparing, learning tech skills, while others are, well, panicking.

The halfway point

Right now, most of us are stuck at Level 2 autonomy: fancy cruise control, lane assist, and autopilot that still needs human eyes on the road. Level 3, where the car handles the driving in limited conditions but you have to take over when it asks, is only just starting to show up. It’s helpful, yes, but still far from full self-driving. And that’s probably a good thing, because if people thought they could just nap while the car drove, you’d have chaos. And honestly, a little human error makes life interesting. Would you really want every commute completely risk-free? It might get boring.

At the end of the day, I think self-driving cars are coming, whether we’re ready or not. But it’s going to be a messy, slow, slightly terrifying ride. Some cities and countries will embrace it, others will resist. Some people will love it, others will sue over it. And me? I’ll probably try it once, then keep one hand on the wheel for reassurance, because old habits die hard.
